YouMVOS: An Actor-centric Multi-shot Video Object Segmentation Dataset

4. University of Oxford 5. ByteDance Inc. 6. Brown University

We are now replacing these videos and the new dataset will be released soon.

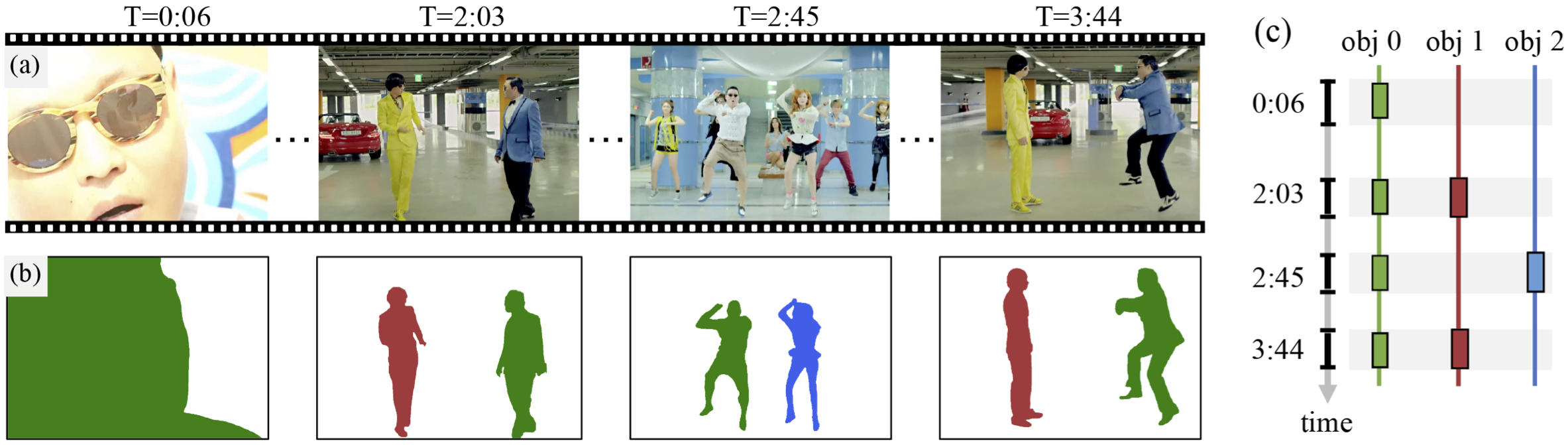

Multi-shot video object segmentation (MVOS). In multi-shot videos, MVOS aims to track and segment selected recurring objects despite changes in appearance (e.g., person in green masks) and disconnected shots (e.g., person in red masks). We show sample (a) frames, (b) segmentation masks, and (c) timelines for the Gangnam Style video in our dataset.

Abstract

Many video understanding tasks require analyzing multi-shot videos, but existing datasets for video object segmentation (VOS) only consider single-shot videos. To address this challenge, we collected a new dataset---YouMVOS---of 200 popular YouTube videos spanning ten genres, where each video is on average five minutes long and with 75 shots. We selected recurring actors and annotated 431K segmentation masks at a frame rate of six, exceeding previous datasets in average video duration, object variation, and narrative structure complexity. We incorporated good practices of model architecture design, memory management, and multi-shot tracking into an existing video segmentation method to build competitive baseline methods. Through error analysis, we found that these baselines still fail to cope with cross-shot appearance variation on our YouMVOS dataset. Thus, our dataset poses new challenges in multi-shot segmentation towards better video analysis.

Example Video